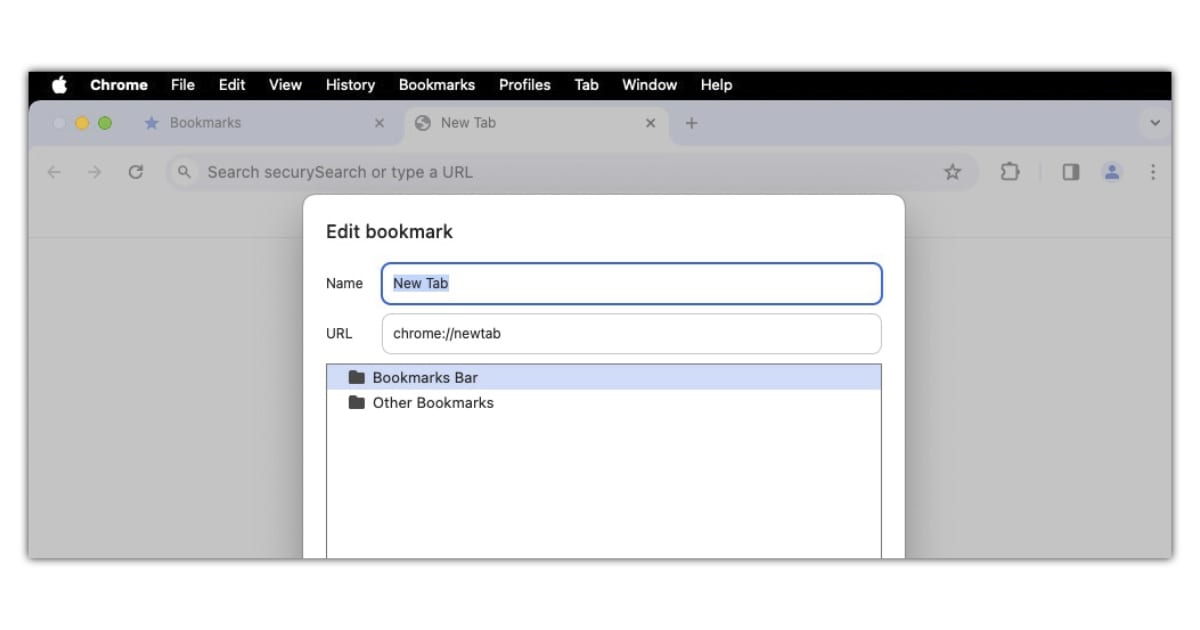

Installing Bookmarklets

Simple Instructions

Ensure the Bookmarks Bar is Visible:

- If you don't see the bookmarks bar, press CTRL + SHIFT + B to display it.

- Alternatively, click the three dots in the upper right corner, hover over "Bookmarks," and check "Show bookmarks bar."

Add the Bookmarklet:

- Visit the web page where the bookmarklet is offered as a link.

- Drag and drop the bookmarklet link to the bookmarks bar.

- If you want to create a bookmarklet from scratch:

  - Right-click on the bookmarks bar.

  - Select "Add page."

  - Give it a name.

  - In the URL field, paste the JavaScript code for the bookmarklet. Remember to prefix it with javascript:.

Using the Bookmarklet:

- To use the bookmarklet, simply click on it in the bookmarks bar.

- It will run on the current web page.

Remember to minify the JavaScript code and remove any comments before adding it to the URL field. Enjoy your new bookmarklet!
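As a rough illustration of that last step, the sketch below turns a small script into a bookmarklet URL. The helper name toBookmarklet is hypothetical, and the comment stripping is deliberately naive: it assumes every statement ends with a semicolon and that no string literal contains comment markers or meaningful runs of whitespace. For anything larger, a real minifier is the safer choice.

```javascript
// Hypothetical helper (not part of any library): builds a bookmarklet URL
// from a short script. Naive by design; see the assumptions above.
function toBookmarklet(source) {
  const minified = source
    .replace(/\/\*[\s\S]*?\*\//g, "") // drop /* block */ comments
    .replace(/^\s*\/\/.*$/gm, "")     // drop full-line // comments
    .replace(/\s+/g, " ")             // collapse whitespace and newlines
    .trim();
  // Wrap in a function so variables do not leak onto the page,
  // then URL-encode so the code survives being stored as a URL.
  return "javascript:" + encodeURIComponent("(function(){" + minified + "})();");
}

const source = `
  // Show the title of the current page
  alert(document.title);
`;
console.log(toBookmarklet(source));
```

The printed string is what goes into the URL field of the new bookmark.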

Harnessing Google Chrome's Headless Mode for Website Screenshots

Yet Another Tool for QA

As a seasoned Quality Assurance (QA) professional, I've witnessed the evolution of numerous tools that have streamlined our testing processes. Today, I'm excited to share insights on a powerful feature of Google Chrome that is often underutilized in QA testing: the headless mode.

What is Headless Mode?

Headless mode is a feature available in Google Chrome that allows you to run the browser without the user interface. This means you can perform all the usual browser tasks from the command line, which is incredibly useful for automated testing.

Why Use Headless Chrome for Screenshots?

Taking screenshots is a fundamental part of QA testing. They help us:

- Verify Layouts: Ensure that web pages render correctly across different browser sizes.

- Perform Image Comparisons: Detect any deviations from a base image, which could indicate unexpected changes or errors.

How to Take Screenshots with Headless Chrome

Using Google Chrome's headless mode to capture screenshots is straightforward. Here's a quick guide:

- Open the Command Line: Access your command prompt or terminal.

- Run Chrome in Headless Mode: Use the command

  google-chrome --headless --disable-gpu --screenshot [your-website-url]

- Specify the Output File: By default, Chrome saves the screenshot as screenshot.png in the current directory. To save it elsewhere, pass a path, as in --screenshot=path/to/file.png.

- Customize the Browser Size: Use the --window-size option to set the dimensions of the browser window, like so: --window-size=width,height.

Practical Example

Let's say we want to take a screenshot of example.com at a resolution of 1280x720 pixels. The command would be:

google-chrome --headless --disable-gpu --screenshot --window-size=1280,720 http://www.example.com/

After running this command, you'll find screenshot.png in your current directory, capturing the website as it would appear in a 1280x720 window.

Another Example:

This example, written for macOS, adds a timestamp to the filename. The spaces in the application path are escaped so the alias expands to a single command:

cd ~/Desktop

alias chrome="/Applications/Google\ Chrome.app/Contents/MacOS/Google\ Chrome"

chrome --headless --timeout=5000 --screenshot="Prod-desktop_$(date +%Y-%m-%d_%H-%M-%S).png" --window-size=1315,4030 https://www.cryan.com/
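The same idea can be scripted. The sketch below, for Node.js, builds a timestamped filename and the argument list for a headless screenshot run. The function names and the way the pieces are split up are illustrative assumptions; only flags already shown in this post are used, and actually launching Chrome is left as a commented-out call because the binary path differs per machine.

```javascript
// Format a Date as YYYY-MM-DD_HH-MM-SS using local time.
function timestamp(date) {
  const pad = (n) => String(n).padStart(2, "0");
  const day = [date.getFullYear(), pad(date.getMonth() + 1), pad(date.getDate())].join("-");
  const time = [pad(date.getHours()), pad(date.getMinutes()), pad(date.getSeconds())].join("-");
  return day + "_" + time;
}

// Build the argument list for one headless screenshot.
function screenshotArgs(url, width, height, prefix, date) {
  const file = prefix + "_" + timestamp(date) + ".png";
  return ["--headless", "--screenshot=" + file, "--window-size=" + width + "," + height, url];
}

const args = screenshotArgs("https://www.cryan.com/", 1315, 4030, "Prod-desktop", new Date());
console.log(args.join(" "));

// To run it, pass the list to Chrome (the path is an assumption for macOS):
// const { execFile } = require("node:child_process");
// execFile("/Applications/Google Chrome.app/Contents/MacOS/Google Chrome", args, console.log);
```

Keeping the arguments in an array avoids the quoting problems that spaces in paths cause on the command line.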

Conclusion

Headless Chrome is a versatile tool that can significantly enhance your QA testing capabilities. Whether you're conducting routine checks or setting up complex automated tests, the ability to capture screenshots from the command line is an invaluable asset.

Stay tuned for more QA insights and tips in our upcoming posts! Next week we'll cover using Opera browser for QA testing.

Validating CSS Selectors in Chrome Console

Tips and Tricks on CSS Selectors

As Quality Assurance professionals, we know that meticulous testing is the backbone of delivering high-quality software. One crucial aspect of our work involves validating CSS selectors to ensure they accurately target the desired elements on a web page. In this blog post, we'll explore how to validate CSS selectors using the Google Chrome Dev Console.

Why Validate CSS Selectors?

CSS selectors allow us to create triggers for various actions, such as tracking clicks, form submissions, or interactions with specific UI elements. However, relying on selectors without proper validation can lead to unexpected behavior or false positives. Let's dive into the process of validating selectors step by step.

Step 1: Inspect the Element

- Right-Click and Inspect:

  - Begin by right-clicking on the element you want to examine (e.g., a button).

  - Select "Inspect" from the context menu.

  - The Elements panel will open, displaying the HTML and CSS related to the selected element.

Step 2: Access the Chrome Dev Console

- Open the Dev Console:

  - If you already have the Dev Console open, click on the "Console" tab (located to the right of the "Elements" tab).

  - If not, open the Dev Console by clicking the three dots in the top-right corner of your Chrome browser, then selecting "More Tools" and "Developer Tools".

Step 3: Evaluate the Selector

- Type in the Console:

  - In the Console, type the following line:

    $$('.your-selector')

  - Replace '.your-selector' with the actual CSS selector you want to test.

  - For example, if you're checking how many buttons on the page have the class "button", use:

    $$('.button')

- View the Results:

  - The Console will display an array of the matching elements.

  - If more than one element matches the selector, you'll see a count (e.g., "(2)").

  - Expand the array to see all the elements that match your selector.

  - Hover over each one to highlight it on the page.

Step 4: Refine Your Selector

- Be More Specific:

  - To narrow down to a specific element, be more specific in your selector.

  - For instance, to target only buttons with class "button" within a specific div, adjust your selector accordingly.
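Steps 3 and 4 can be wrapped in a small helper to paste into the Console. This is a sketch under assumptions: the name checkSelector is made up for illustration, and it uses the standard document.querySelectorAll rather than the Console-only $$ shortcut so the same function also works outside DevTools. The selectors in the usage lines are placeholders.

```javascript
// Hypothetical helper: reports how many elements a selector matches.
// `root` defaults to the page's document; pass another element to scope the search.
function checkSelector(selector, root) {
  const scope = root || document;
  const matches = Array.from(scope.querySelectorAll(selector));
  return {
    selector: selector,
    count: matches.length,
    unique: matches.length === 1, // true only when exactly one element matches
    matches: matches,
  };
}

// Usage in the Console (placeholder selectors):
// checkSelector(".button").count          // how many elements have class "button"
// checkSelector("div.checkout .button")   // narrower: buttons inside a specific div
```

A selector meant to target a single element is ready to use once unique comes back true.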

Conclusion

Validating CSS selectors using the Chrome Dev Console ensures that our triggers and tracking accurately reflect the intended behavior. Remember to test thoroughly, be specific, and avoid assumptions about uniqueness. Happy testing!

What is a Bug?

Unraveling the Mysteries of Software Glitches

When Code Misbehaves: A Journey into the World of Bugs

As software developers, we've all encountered them: the elusive, pesky creatures that lurk within our code, wreaking havoc and causing sleepless nights. Yes, I'm talking about bugs. But what exactly is a bug? Let's dive into the fascinating world of software glitches and unravel their secrets.

The High-Level View

James Bach, a testing guru, succinctly defines a bug as "Anything that threatens the value of the product. Something that bugs someone whose opinion matters." It's a poetic take on the subject, emphasizing the impact of these tiny gremlins on our digital creations. But let's break it down further.

The Multifaceted Bug

The Unexpected Defect: A bug refers to a defect, a deviation from the intended behavior. When your code misbehaves, it's like a rebellious child refusing to follow the rules. Maybe that button doesn't submit the form, or the login screen greets users with a cryptic error message. These deviations threaten the product's integrity.

Logical Errors: Bugs often stem from logical errors. Imagine a calculator app that adds instead of subtracts. That's a bug. It's like your calculator suddenly deciding that 2 + 2 equals 5. Logical errors cause our code to break, leading to unexpected outcomes.

The Imperfection Quirk: Bugs aren't always catastrophic. Sometimes they're subtle imperfections: a misaligned button, a typo in an error message, or a pixel out of place. These quirks don't crash the system, but they irk perfectionists (and rightly so).

Microbial Intruders: Bugs can be like microscopic pathogens. In the software realm, they take the form of microorganisms: viruses, bacteria, and other nasties. A bug might crash your app, freeze your screen, or make your cursor jittery. These digital microbes cause illness in our codebase.

The Listening Device: Bugs can be sneaky spies. Imagine a concealed listening device planted in your app. It doesn't transmit classified secrets, but it does eavesdrop on your code's conversations. These bugs, our digital moles, keep an eye on things.

Sudden Enthusiasm: Bugs strike with fervor. One moment, your app hums along peacefully; the next, it's throwing tantrums. It's like your code caught a sudden enthusiasm bug. "I shall crash now!" it declares, leaving you bewildered.

The Bug Whisperer: QA testers are bug whisperers. They coax bugs out of hiding, reproduce their mischievous behavior, and document their antics. It's a delicate dance: the tester and the bug, waltzing through test cases.

The Bug-Hunting Lifestyle

So, what's life like for bug hunters? Picture this:

Late Nights: Bug hunters burn the midnight oil. They chase bugs through tangled code, armed with magnifying glasses (metaphorical ones, of course).

Edge Cases: While others sip coffee, bug hunters ponder the weirdest scenarios. "What if the user clicks 'Submit' while standing on one leg during a solar eclipse?" They explore edge cases with the zeal of detectives solving cryptic crosswords.

Bug Reports: Bug hunters file reports like seasoned journalists. "Dear Devs, I spotted a pixel hiccup in the checkout button. Please investigate." Their bug reports are a mix of Sherlock Holmes and Hemingway.

In Conclusion

Next time you encounter a bug, remember that it's not laziness; it's the QA mode of your code. Bugs keep us humble, teach us resilience, and remind us that perfection is a mirage. So, embrace the bugs, my fellow developers. They're the spice in our digital stew, the glitches that make our world interesting.

And when someone accuses you of being lazy, just smile and say, "No, my friend, I'm just in QA mode."

The more you test something...

Testing Keeps Finding Bugs

In the realm of Quality Assurance (QA), there lies a fundamental truth encapsulated by the quote, "The more you test something, the more you'll find something." This statement is more than just a cautionary tale; it's a principle that guides the relentless pursuit of excellence and reliability in software development. As a seasoned QA professional with a decade of experience under my belt, I've seen firsthand how this principle plays out in real-world testing scenarios. In this week's blog, we'll dive deep into the implications of this quote, exploring its significance and how it shapes our approach to QA.

Uncovering the Layers

At its core, the quote speaks to the iterative nature of testing. With each test cycle, we peel away layers, uncovering not just bugs but also insights into the software's behavior under various conditions. This process is not merely about finding faults; it's about understanding the product more deeply. The complexity of modern software systems means that no matter how comprehensive the initial tests are, subsequent testing is almost guaranteed to reveal something new.

The Paradox of Testing

There's an inherent paradox in QA that this quote subtly highlights: the goal of achieving a bug-free product is both the driving force and the unreachable star of software testing. Each bug found and fixed brings us closer to this ideal, yet the very act of testing uncovers more areas to explore, more scenarios to consider, and potentially, more bugs to fix. This is not a discouragement but a recognition of the dynamic nature of software and its interaction with an ever-changing world.

Quality as a Continuous Journey

This quote underscores the philosophy that quality is not a destination but a continuous journey. In the landscape of QA, complacency is the enemy. The belief that a product is free of defects after a round of testing is a fallacy. Instead, we must adopt a mindset of continuous improvement, where each testing cycle is an opportunity to learn, adapt, and enhance the software.

Strategic Depth in Testing

Understanding that more testing leads to more discoveries, we must strategize our testing efforts to maximize efficiency and effectiveness. This means prioritizing test cases based on risk, employing automated testing for repetitive tasks, and leveraging exploratory testing to uncover the unknown. It's about smart testing, not just more testing. By focusing on critical areas and incorporating feedback loops, we ensure that our testing efforts are both deep and broad, covering as much ground as possible.
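One simple way to make risk-based prioritization concrete is to score each test area by likelihood and impact and run the highest scores first. The sketch below assumes a 1 to 5 scale for both and multiplies them; the scale, the field names and the sample areas are illustrative assumptions, not a standard.

```javascript
// Sort test areas so the riskiest come first.
// risk = likelihood of failure (1-5) x impact of failure (1-5)
function prioritizeByRisk(areas) {
  return areas
    .map((area) => ({ ...area, risk: area.likelihood * area.impact }))
    .sort((a, b) => b.risk - a.risk);
}

// Made-up sample data for illustration only.
const areas = [
  { name: "Profile page layout", likelihood: 2, impact: 1 },
  { name: "Checkout payment", likelihood: 3, impact: 5 },
  { name: "Login", likelihood: 2, impact: 5 },
];

for (const area of prioritizeByRisk(areas)) {
  console.log(area.risk, area.name);
}
```

The scores matter less than the conversation they force: the team has to agree on which failures would hurt most before deciding where to spend testing time.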

Embracing the Discoveries

Finally, this quote invites us to embrace the discoveries made during testing with a positive mindset. Each finding, whether it's a bug, a usability issue, or a performance bottleneck, is an opportunity to improve. It's a testament to the thoroughness of our testing efforts and our commitment to quality. These discoveries should be celebrated as milestones on the road to excellence, not merely seen as obstacles.

Conclusion

"The more you test something, the more you'll find something" is not just a statement about the inevitability of finding bugs; it's a call to action for QA professionals. It challenges us to dig deeper, to look closer, and to never settle for "good enough." As we continue to navigate the complex and ever-evolving landscape of software development, let this principle guide us in our unending quest for quality. Let us embrace the iterative nature of testing, the strategic depth of our efforts, and the positive impact of our discoveries. After all, in the world of QA, every bug found is a step closer to a better product.

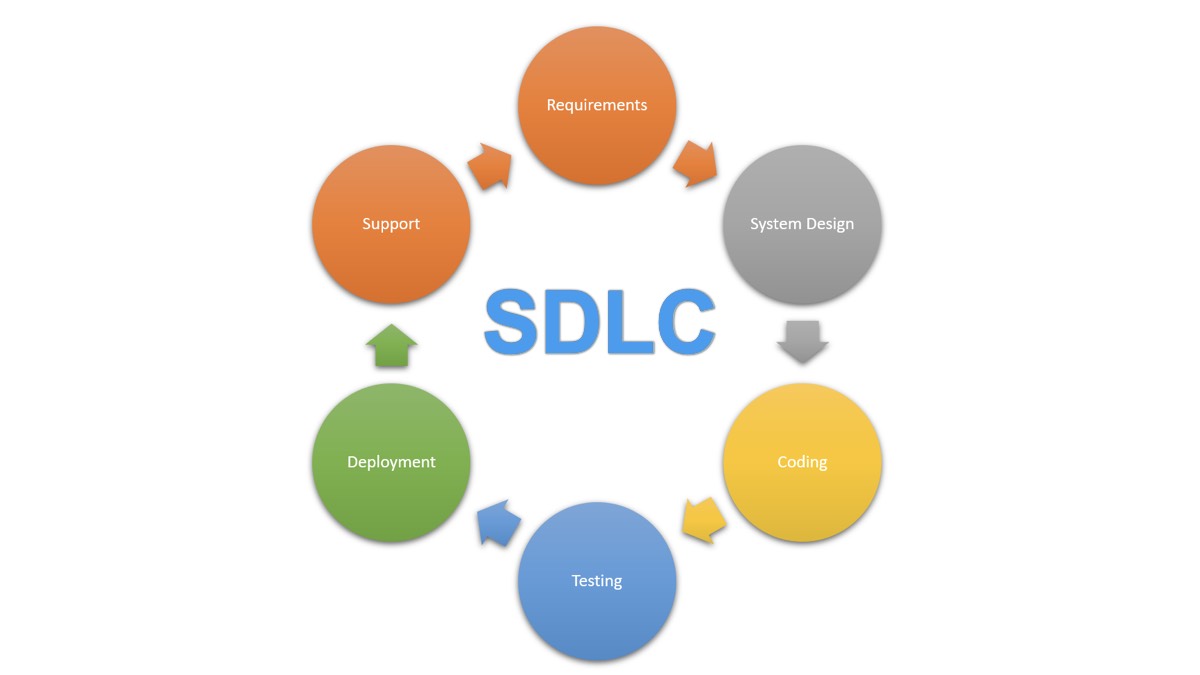

Software Development Lifecycle

QA Practices in a Hybrid Model

As a seasoned Quality Assurance (QA) professional, I've witnessed the evolution of software development practices over the years. The SDLC (Software Development Lifecycle) remains at the core of successful software projects, guiding teams through planning, development, testing, deployment, and maintenance. In this week's QA blog, let's delve into the essential phases of the SDLC, explore various methodologies, and discuss how QA practices fit seamlessly into a hybrid model.

What is the SDLC?

The SDLC is a structured process that enables development teams to build high-quality software efficiently. It encompasses several phases, each with specific activities and deliverables:

- Requirements Gathering and Analysis:

  - Business analysts collaborate with stakeholders to define and document software requirements.

  - QA's role: QA professionals actively participate in requirement reviews, ensuring clarity, completeness, and measurability. We validate that requirements align with quality and compliance standards.

- System Design:

  - Software architects translate requirements into a high-level design.

  - QA's role: QA experts review design documents, focusing on testability, scalability, and security aspects. We identify potential risks and suggest improvements.

- Coding:

  - Developers write code based on the system design.

  - QA's role: QA engineers can contribute by reviewing code quality and adherence to coding standards, and by identifying potential defects early.

- Testing:

  - The software undergoes rigorous testing to uncover bugs and ensure it meets requirements.

  - QA's role: QA testers design test cases and execute functional, regression, and performance tests. We collaborate closely with developers to address issues promptly.

- Deployment:

  - The software is released to the production environment.

  - QA's role: QA participates in smoke testing, ensuring the deployment process is smooth. We verify that the software functions correctly in the live environment.

- Maintenance and Support:

  - Ongoing activities include user training, monitoring performance, and fixing defects.

  - QA's role: We continue to validate enhancements, monitor performance metrics, and address any post-deployment issues.

SDLC Methodologies

Various SDLC methodologies exist, each with its strengths and weaknesses. Let's explore a few:

- Waterfall Model:

  - Sequential approach with distinct phases.

  - QA's role: QA teams perform comprehensive testing at each stage, ensuring quality gates are met before progressing.

- Agile Model:

  - Iterative and incremental development.

  - QA's role: QA actively participates in sprint planning, executes tests during each sprint, and provides continuous feedback.

- Spiral Model:

  - Combines iterative development with risk analysis.

  - QA's role: QA assesses risks, validates prototypes, and ensures risk mitigation strategies are effective.

- Hybrid Model:

  - A pragmatic blend of waterfall and agile approaches.

  - QA's role: In the hybrid model, QA practices adapt to the specific phase. During the initial requirements and design phase, QA focuses on risk analysis and validation. As the project transitions to agile sprints, QA actively participates in testing and deployment.

QA Practices in a Hybrid Model

In a hybrid model, QA professionals play a pivotal role:

- Requirements Phase:

  - QA ensures that requirements are clear, testable, and aligned with quality standards.

  - Risk analysis helps prioritize testing efforts.

- Design Phase:

  - QA reviews design documents, emphasizing testability and security.

  - Early identification of potential issues prevents rework.

- Agile Sprints:

  - QA participates in sprint planning, executes functional and regression tests, and collaborates with developers.

  - Continuous feedback ensures quality throughout.

- Deployment and Maintenance:

  - QA verifies successful deployment and monitors post-release performance.

  - Ongoing testing maintains software quality.

Conclusion

The SDLC, combined with the right methodology, empowers development teams to create exceptional software. In a hybrid model, QA practices adapt dynamically, ensuring quality remains at the forefront. As QA professionals, our ability to navigate these phases and methodologies contributes significantly to successful software delivery.

Why You Shouldn't Use Excel as a Test Case Repository

Five Good Reasons to Move Away from Excel

In the world of software testing, the organization and management of test cases are critical to ensuring the quality and reliability of software products. While Microsoft Excel has long been a go-to tool for various data management tasks, including some aspects of Quality Assurance (QA) processes, it's far from the ideal solution for managing test cases. With a decade of experience in QA, I've seen firsthand the pitfalls of relying on Excel as a Test Case Repository. Here are the top five reasons why you should reconsider using Excel for this crucial aspect of software testing.

1. Lack of Real-Time Collaboration

In today's fast-paced and often remote working environments, real-time collaboration is key. Excel, especially when used as a standalone file without integration into cloud services like Office 365, severely limits the ability of teams to work together in real-time. This can lead to issues such as version conflicts, overwritten data, and a general bottleneck in the testing process, as team members cannot simultaneously view or edit the test cases effectively.

2. Scalability Issues

As projects grow, so do their testing requirements. Excel is not designed to efficiently handle large datasets or complex relationships between data. Test case repositories can quickly become unwieldy in Excel, with thousands of rows and numerous columns becoming difficult to navigate, update, and maintain. This can lead to decreased productivity and an increased risk of errors.

3. Inadequate Reporting and Analytics

Effective testing requires robust reporting and analytics capabilities to track test progress, coverage, and outcomes. Excel's capabilities in this area are limited, requiring manual setup and updates to reports. It lacks the dynamic reporting features found in dedicated test management tools, which can automatically generate detailed reports and analytics, providing insights into the testing process and helping to identify areas of concern quickly.

4. Risk of Data Loss and Corruption

Excel files are prone to corruption, especially as file sizes increase or when shared across multiple users. There's also a significant risk of data loss, whether from accidental deletion or the lack of a robust version control system. Unlike specialized test case management tools that offer detailed audit trails and data backup mechanisms, Excel leaves your critical testing data vulnerable.

5. No Integration with Other Testing Tools

Modern QA processes often involve a suite of testing tools, including issue tracking systems, automation tools, and continuous integration/continuous deployment (CI/CD) pipelines. Excel lacks the ability to integrate natively with these tools, making it challenging to establish a seamless testing workflow. This lack of integration can lead to inefficiencies and errors as data must be manually transferred between systems, increasing the workload on QA teams and the potential for mistakes.

Conclusion

While Excel might seem like a convenient and familiar tool for managing test cases, its limitations become apparent as testing requirements grow in complexity and scale. The lack of real-time collaboration, scalability issues, inadequate reporting, risk of data loss, and lack of integration with other testing tools make Excel a suboptimal choice for managing test cases. Investing in a dedicated test case management tool can significantly enhance the efficiency, reliability, and effectiveness of your QA processes, leading to higher quality software and happier teams. As we move forward in the ever-evolving landscape of software development, it's crucial to utilize tools that are specifically designed for the challenges and needs of modern QA practices.

Heuristics in QA

Practicality Over Perfection

Welcome back to our weekly QA blog, where we delve into the intriguing world of quality assurance and software testing. This week, we're exploring the concept of heuristics, a term that's become increasingly relevant in our field.

Understanding Heuristics

In the realm of software testing, heuristics are often described as "fallible methods of solving a problem or making a decision." This definition, coined by esteemed QA experts Cem Kaner and James Bach, highlights the inherent uncertainty and flexibility within heuristics.

Karen Johnson further elucidates this concept, defining heuristics as "experience-based techniques for problem solving, learning, and discovery." In the often unpredictable and complex landscape of software testing, heuristics act as our compass, guiding us through uncharted territories where traditional methods might falter.

Why Heuristics Matter in QA

Practicality Over Perfection: Software testing often involves vast and intricate systems where an exhaustive check is impractical, if not impossible. Heuristics allow testers to use their judgment, experience, and intuition to identify potential problem areas, prioritize testing efforts, and find satisfactory solutions more efficiently.

Adaptability: Heuristics are not rigid rules but adaptable guidelines that can be tweaked based on the context of the project. This adaptability is crucial in a field where no two projects are exactly the same.

Fostering Innovation: By encouraging testers to rely on their insights and experiences, heuristics foster a more creative and investigative approach to testing, often leading to the discovery of unconventional bugs or issues.

Examples of Heuristics in Software Testing

- Error Guessing: This involves anticipating where errors might occur based on past experiences or common failure patterns.

- Boundary Value Analysis: A heuristic technique where tests are designed around the boundaries of input ranges, where errors are more likely to occur.

- Risk-Based Testing: Prioritizing testing efforts based on the risk and impact of potential failures.
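To make boundary value analysis concrete, the sketch below generates the classic test inputs around an inclusive integer range: just below, on, and just above each boundary. The function names and the 1 to 100 quantity rule are hypothetical examples.

```javascript
// Return the boundary test inputs for an inclusive integer range [min, max].
function boundaryValues(min, max) {
  return [min - 1, min, min + 1, max - 1, max, max + 1];
}

// Hypothetical rule under test: a quantity must be between 1 and 100.
function isValidQuantity(n) {
  return Number.isInteger(n) && n >= 1 && n <= 100;
}

for (const value of boundaryValues(1, 100)) {
  console.log(value, isValidQuantity(value));
}
```

Six inputs cover the places where off-by-one mistakes, such as writing > instead of >=, tend to show up.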

Applying Heuristics: A Real-World Approach

- Start with the Known: Leverage your past experiences and knowledge about similar projects or systems to guide your initial testing efforts.

- Embrace Exploration: Allow yourself the freedom to explore the software without strict boundaries. Sometimes the most insightful discoveries are made serendipitously.

- Collaborate and Learn: Share insights and experiences with your team. Heuristics thrive in environments where knowledge and ideas are freely exchanged.

- Iterate and Adapt: Be prepared to change your approach as you gather more information about the system under test.

The Balance Between Heuristics and Structured Testing

While heuristics play a critical role in QA, they should complement, not replace, structured testing methodologies. A balanced approach, where structured tests provide coverage and heuristics guide exploratory efforts, often yields the best results.

Conclusion

Heuristics are a testament to the art and science of software testing. They remind us that, in our quest for quality, our experience, intuition, and judgment are as valuable as our technical skills. As we continue to navigate the evolving landscapes of software projects, let us embrace heuristics as tools for learning, discovery, and problem-solving.

Stay tuned for more insights and discussions in our upcoming blogs. Happy testing!

No Such Thing As Free Lunch in QA

Don't Cut Corners in QA

As the saying goes, "there's no such thing as a free lunch". This statement is especially true in the world of software quality assurance testing. Software testing is a crucial component of the software development process, ensuring that the final product meets the desired quality standards and user expectations. However, there are costs associated with software testing that cannot be ignored, and attempts to cut corners in this area can lead to significant problems down the road.

One of the most significant costs of software testing is time. Thorough testing takes time, and rushing the testing process to save time can result in missed bugs and defects. These defects can have severe consequences, such as security vulnerabilities or loss of data. Fixing these problems can cost far more time and money than the time saved by rushing the testing process.

Another cost associated with software testing is resources. To ensure thorough testing, you need the right people with the right skills, tools, and resources to do the job. Attempting to cut corners by using unskilled or inexperienced testers, inadequate tools or insufficient resources can lead to unreliable testing results and missed defects.

In addition to the direct costs of software testing, there are also indirect costs to consider. These include the costs of rework, delays in release schedules, and damage to reputation. If a product is released with known defects or quality issues, it can negatively impact the reputation of the company and its products, leading to lost business and revenue.

Therefore, it's essential to remember that there is no such thing as a free lunch when it comes to software testing. Attempting to cut corners and save costs in this area can lead to significant problems down the road. Instead, it's crucial to invest in the right resources, people, and tools for quality assurance testing to ensure the product meets the desired quality standards and user expectations.

In conclusion, software quality assurance testing is a critical component of the software development process. Cutting corners in this area to save time or money is a risky strategy that can lead to significant problems down the road. It's essential to invest in the right resources, people, and tools for software testing to ensure the final product meets the desired quality standards and user expectations. Remember, there's no such thing as a free lunch, and attempting to take shortcuts in software testing can be costly in the long run.

Winter Rules Are In Effect

Just like Golf

Just like in golf, where winter rules adapt the game to colder, more challenging conditions, the world of Software Quality Assurance (QA) often requires its own set of "winter rules" to navigate the complex landscape of testing during the colder months. Let's tee off and explore some essential tips and strategies to keep in mind for QA this winter.

1. Embrace AI and Machine Learning: Your Caddies in the Snow

With the landscape of software development continually evolving, AI and machine learning have become crucial allies, much like a caddy on a snow-covered golf course. They assist in automating test case generation and optimizing test coverage, ensuring that your QA process doesn't freeze over in the winter chill. Like a caddy predicting tricky terrain, AI algorithms can predict potential defects, making your testing process more efficient.

2. Continuous Testing: Keeping the Game Moving

Just as a golfer wouldn't stop after a single hole, continuous testing ensures that QA is an ongoing process. With the adoption of DevOps and CI/CD practices, tests are automated throughout the development pipeline. This approach helps to keep the game moving, identifying issues early and reducing the time and cost associated with fixing bugs.

3. Shift-Left Testing: Clearing the Snow Early

In golf, it's always best to avoid hazards, and in QA, shift-left testing does just that. By moving testing activities earlier in the development cycle, defects are caught at their source. Think of it as clearing the snow from the fairway early on, ensuring a smoother play throughout.

4. Quantum Computing Testing: New Clubs in the Bag

Quantum computing is like a new set of golf clubs: it offers great potential but requires a different approach. The unique challenges of quantum software require innovative testing strategies. This winter, be prepared to add these new "clubs" to your QA "golf bag."

5. IoT and Embedded Systems Testing: Playing on a New Course

Testing IoT devices is akin to playing on a new, unfamiliar golf course. It demands understanding the unique challenges of diverse environments and ensuring secure communication, much like adapting to different course layouts and hazards.

6. Data Quality: The Scorecard of Your Game

In QA, data quality is your scorecard. It's vital to ensure that the data is accurate, complete, and reliable. This winter, focus on maintaining a clean scorecard by integrating comprehensive data quality checks into your QA process.

7. Mobile Testing: Navigating the Bunkers

Mobile app testing is as crucial as navigating the bunkers in golf. It's about ensuring that the apps perform well, are user-friendly, and are secure across various devices, much like a golfer mastering different bunker shots.

8. Cybersecurity: Guarding Against the Unexpected Hazards

Cybersecurity in QA is like being prepared for unexpected hazards on a golf course. Regular security testing, including ethical hacking and penetration testing, ensures that your software is fortified against unforeseen risks.

9. Exploratory Testing: The Creative Play

Finally, exploratory testing in QA is the equivalent of a creative play in golf. It's about using intuition and experience to explore and identify issues that scripted testing might miss, much like a golfer taking a creative shot for the green despite challenging conditions.

Conclusion: Keeping Your Game Sharp in the QA Winter

As the winter sets in, it's crucial for QA professionals to adapt their strategies, much like golfers adjust their game to the seasonal changes. By embracing these winter rules, from leveraging AI and ML and engaging in continuous and shift-left testing to focusing on data quality and cybersecurity, you can ensure that your software testing process remains robust and efficient, even in the frostiest of conditions.

Remember, in the world of software QA, every season brings its own challenges and opportunities. This winter, stay ahead of the game by being adaptable, innovative, and vigilant. Happy testing, and may your metaphorical drives land on the fairway and your code be bug-free!

About

Weekly tips and tricks for Quality Assurance engineers and managers. All reviews are unbiased and are based on personal use. No money or services were exchanged for the reviews posted.

Check out all the blog posts.

Schedule

| Day | Topic |
| --- | --- |
| Friday | Macintosh |
| Saturday | Internet Tools |
| Sunday | Open Topic |
| Monday | Media Monday |
| Tuesday | QA |
| Wednesday | New England |
| Thursday | Gluten Free |

Other Posts

- Unhelpful Error Messages

- When Not to Automate

- November Graphics

- Copy CSS Selector

- QA Video

- Design By Contract

- Fixed some Classic Posts

- QA Graphics

- Release Canaries

- Browser Cookie Size

- QA up at Night

- Blue Button

- What Wikipedia Can Not Tell You About Test Link

- Undernourished Simpsons Proposition

- Ask QA: Should You Always Report Bugs?